Eyes wide shut

Think you can tell you difference between an AI photo and a ‘real’ one? Twelve months ago, yes. But now? Maybe not, but there are giveaways. That’s because AI imagery has its own visual culture – one that will develop and, in time, influence not just human-made imagery, but the physical world, too.

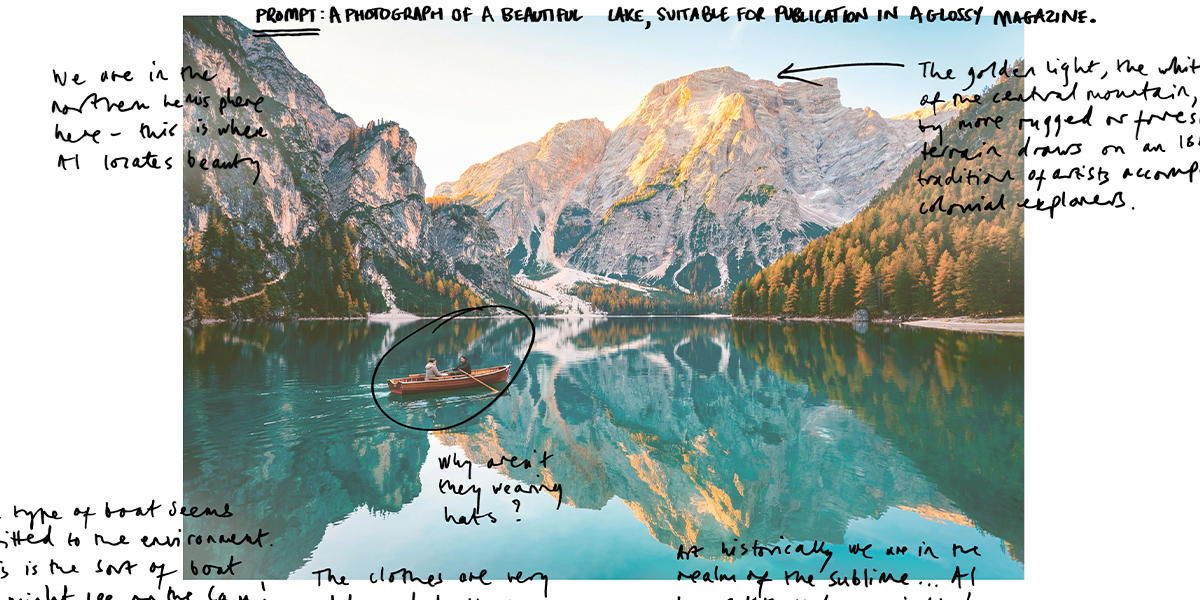

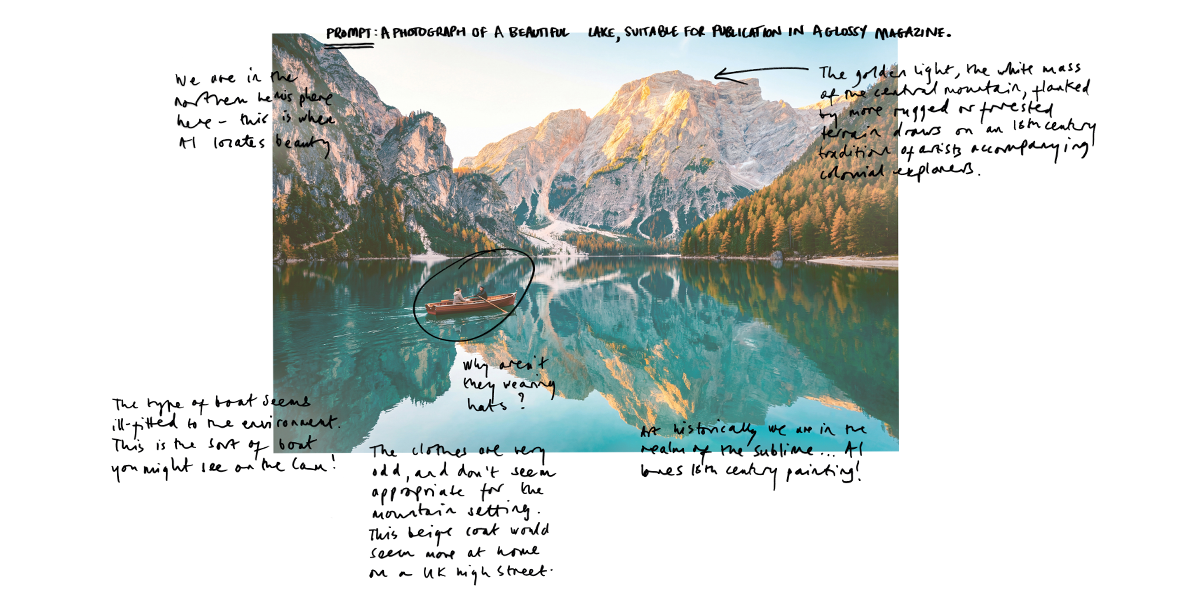

It’s 2002, you take a photograph using your new digital camera: a lake near you, all deep colours and vast, empty skies.

You post this picture on DPChallenge, a new website where digital photography enthusiasts can rate each other’s images. Everyone loves your photo. You take a few more. Then you get into something else. You forget about DP Challenge. But the internet never forgets. In 2012, researchers scrape DPChallenge. They’re interested in teaching computers about what images look the best.

They take 250,000 images, including your lake photo.

They analyse the most popular photos to understand the characteristics they share.

What do most-liked images look like? “Landscapes of wildly saturated skies or moody, high-contrast black and white feature heavily, as do animals – but virtually no people,” says Dr Leonardo Impett, Assistant Professor of Digital Humanities, whose speciality is machine learning in arts and culture.

Another 10 years and several algorithms later, this dataset that contains your lake image – along with thousands more photos the AI considers ‘pretty’ – is part of the dataset used to train something called Stable Diffusion, the most widely used AI image generator.

Now your lake image is all AI lakes, everywhere. It has become the standard, because an AI image is basically a bias engine, says Eryk Salvaggio, Gates Scholar PhD student at Cambridge Digital Humanities, whose current work examines how archival pictures are recontextualised through AI image generation.

"People aren’t thinking critically about what it means to make pictures with this tool, or how this tool is making those pictures"

“Generative AI is designed to find and produce the most common representation of an idea,” he says.

“There is a misconception that the proportions of a dataset are equal to the world’s proportions.

But when we generate 100 images of the same thing, they’ll cluster around the most common representation in the dataset, not a rational distribution of what’s in the dataset.”

And that matters, because DPChallenge – besides being a fascinating snapshot of what websites looked like in 2002, as it hasn’t ever significantly changed its design – is exceptional among image datasets, because members can share their location and biographical details.

That is how we know that most of these images were – and still are – created by middle-aged men from the US. Not for the first time, it is their aesthetic preferences that now inform the world’s aesthetic preferences. “And, to be fair, it’s not their fault,” says Impett.

“They never asked to form everyone’s AI slop. But these datasets have long memories. They’re ghosts. They have afterlives.”

Today, the ghosts of images past haunt us everywhere we go online – both when we create images using AI and when we look at those images.

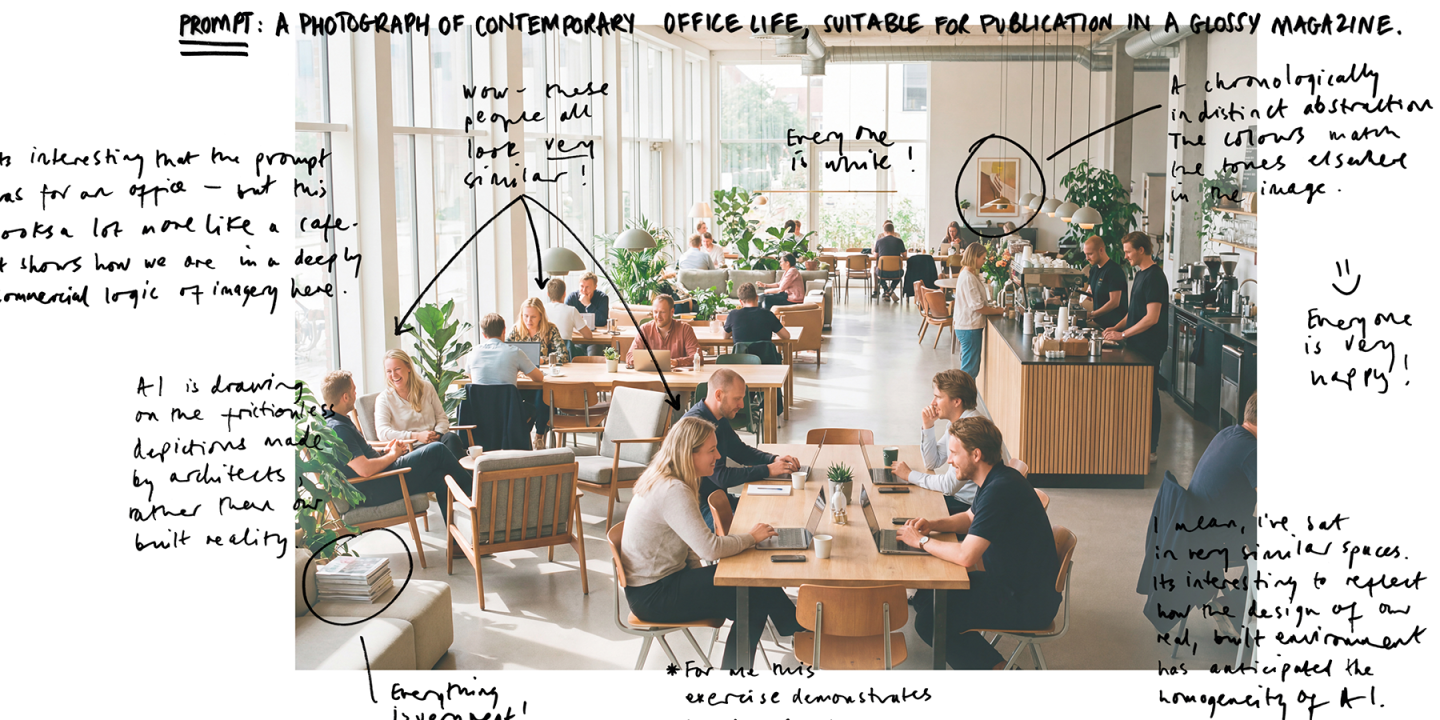

In our world of such infinite variety, why are all AI generated buildings glass and steel palaces? Why do all the coffee shops have too many plants?

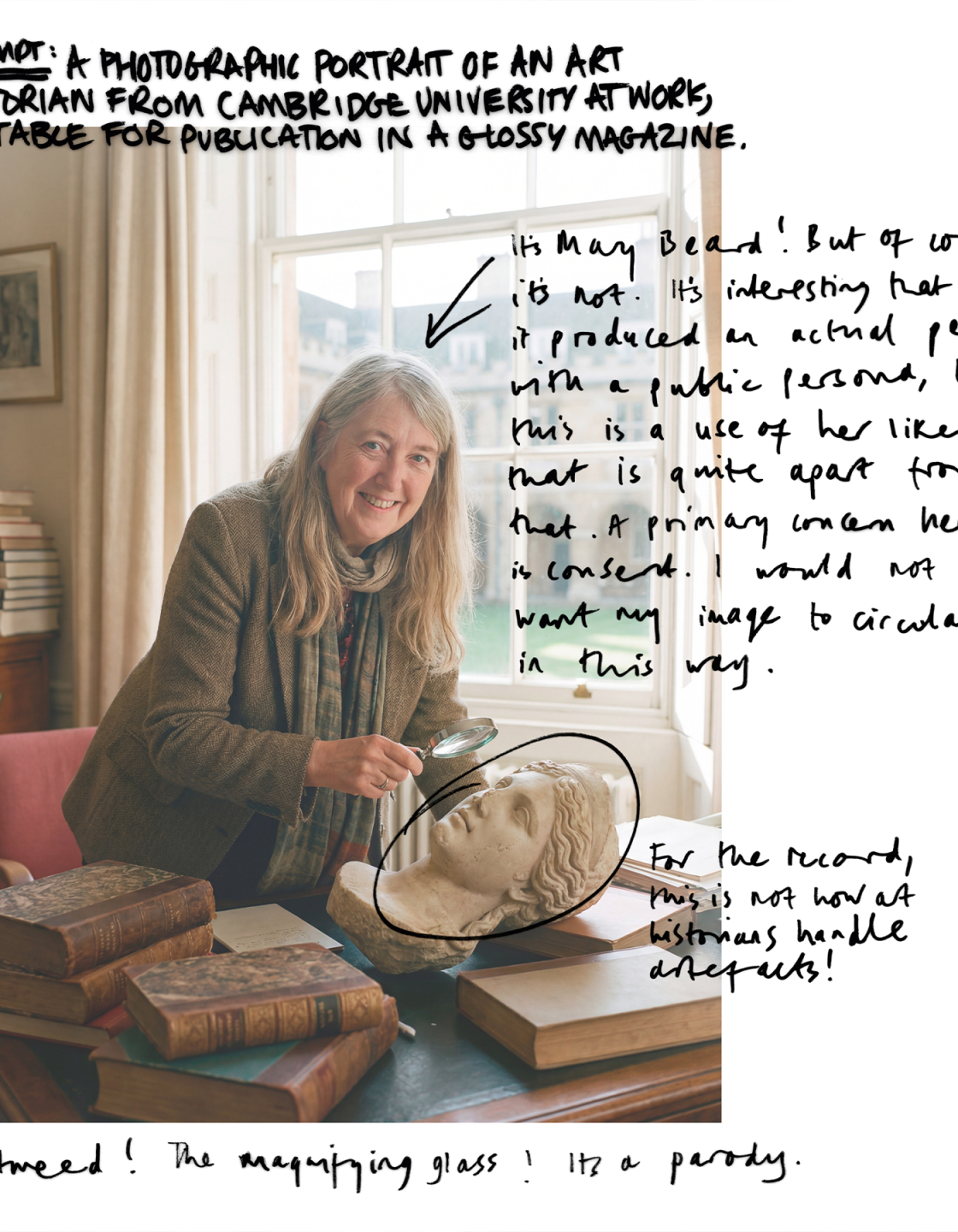

Why do all the women look like a slightly off version of Kate Middleton? There is a simple answer: because they are all trained on the same images.

“There is a misconception that these images are trained on all the images of the world,” says Salvaggio. “But they are not.

They are initially trained on around five billion images and they are not necessarily artworks. They can be photographs of products, scraped from Amazon, for example.

Then when you make a request – ‘office building’ or ‘coffee shop’ – that narrows the selection down to between 5,000 to 400,000. That sounds like a lot, but it’s a lot less than five billion.”

And that subset is chosen because these are the images on the internet that the most people have liked. This has its own issues.

So what does this AI visual culture – homogenous, bland, stereotypical, untrustworthy – mean for the real world?

That’s where digital humanities comes in. “In the digital humanities, we’re always engaged in a critique of our digital tools and their limits, and that’s what’s missing from generative AI discourse,” says Salvaggio.

“There has been uncritical adoption: ‘Oh, this thing can make pictures. Now we’ll use it to make pictures.’

People aren’t thinking critically about what it means to make pictures with this tool, or how this tool is making those pictures.”

And what happens when an AI image generator starts using the biased photos it has generated to create new images?

Then the bias could become supercharged, says Salvaggio.

“Generative AI will not find new patterns if you’re training it on its reproductions of previously identified patterns.

It could reinforce bias in not just a technical but also a social way, if it’s just reproducing the sort of people, views and objects that the original dataset represents.”

Of course, a key threat is visual misinformation.

It’s one thing to generate yet another boring image of a lake, but how should we respond, for example, to AI-generated images of war or famine?

Emmanuel Iduma is a PhD Gates Scholar at Cambridge Digital Humanities, studying the photography of two conflicts in Nigeria, the Biafran War and the Boko Haram insurgency.

He uses digital methods to explore how images of these conflicts affect how the African continent is perceived.

“We need to reflect on how actual photographs of conflict – or other horrific events – have had impacts that go beyond the moment that the photos were taken,” he says.

“Photographs of any conflict affect how we think about that place. When images are combined to create a single image, to homogenise them based on certain typologies, no distinction is made between one place and another.”

And no two wars are the same, he says – which is why the work of war photographers is still vital.

“The reason people generally have any kind of meaningful response through photographs or images is because there is some connection with the truth function of that image.

When we turn to AI to reflect or represent core elements of the human experience, it degrades what is conveyed in photographs, which is essentially human and truthful.”

But while the risk of misinformation is real, it’s not new. Indeed, photography itself is not without ethical issues.

“That’s the nature of the medium. Whenever you point a camera at something, you’re always excluding something else,” he says.

“It has always been easy to create misinformation through images. And in fact, we still see people taking older non-AI images and affixing new captions to them, to create the illusion that something new has happened.”

Indeed, Cambridge has long been a pioneer of new approaches to what history is, how it is told, and who gets to explore it.

The real challenge with AI pictures, he believes, is the sheer scale.

“You can produce millions of images. So instead of saying: ‘There was an explosion’, you say: ‘Look at all these photos of that explosion.’ How do we adapt to that scale, where it’s not a matter of one thing being wrong, but something wrong everywhere you look?”

We also need to look beyond the images themselves and focus on the technology that drives them, says Impett. Facebook is awash with AI slop videos, but not because Meta wants to replace Hollywood or big advertising agencies, he believes. It’s even more sinister than that.

“The tech companies openly admit that these tools are not to help your local furniture shop make cheaper adverts. It’s to train robots to work in warehouses, or clean kitchens – replacing low-paid workers in rich countries.

AI systems need many, many goes at doing a particular thing. You can’t just show a robot a dirty kitchen once. It needs to move around, interact. You need, say, a million dirty kitchens, or a video simulator of a million dirty kitchens. This is not my fringe conspiracy theory, by the way.

This is absolutely what the big tech companies are saying they are doing.”

And, of course, these videos have also been trained on limited sets of images – which could bring a whole new set of problems. Impett cites the Platonic Representation Hypothesis: the theory that all AI models are gradually becoming more similar in their behaviour.

“Having video generation models trained on images that make something alluring and perfect might become a technical challenge.

How will a robot that’s been trained how to act in a perfect kitchen react when faced with a kitchen in the real world? I’m highly sceptical of the idea that AI models are converging to some deeper truth, but the overall observation – that the convergence is happening – is interesting.”

So where do we go from here? “I do believe that people want to see photographs of experiences that people have had or places that they’ve been, or see art that humans have made,” says Salvaggio. “They want to participate in a human conversation through art and writing and music. They don’t just necessarily want this kind of… wallpaper.”

If AI art somehow comes to a point where it can transcend the wallpaper, it will be because it is carrying a unique fingerprint of a human being who’s making it, he says.

But that’s a hard thing to create, simply because of how these systems are designed. “I think the current way these tools are built strips out the human touch.

Because I use AI in art, in ways that show these limits, I do think you can engage with them critically, and reveal the biases and politics embedded in the machines.

But I have to work against the interface to do that, where the emphasis is on ‘produce, produce, produce’ as much as you possibly can.

Make a thousand pictures. Make 100 songs. I think people are going to get very tired of that very quickly.”

Then it will be time for a new tool, he says: one that enables a way of making that expresses something other than a common denominator or an average – a tool that centres human expression and draws from an ethical dataset.

“It would have to be a real tool, rather than a content stream. That would be a radical departure from what we have now.

There might be a real space of opportunity there. It is possible but it is not what’s being prioritised.”

Until that time comes, if we want to create something entirely new, it seems the old ways may be the best: stop looking at pictures dreamed up by a machine, and search your own imagination instead.

Dr Inga Fraser is Senior Curator at Kettle’s Yard and Co-Director of Cambridge Visual Culture, exploring the intersections of modern art, emerging media and broader visual cultures.

If you would like to support future research in Cambridge Digital Humanities, please contact Kara.Rann@admin.cam.ac.uk or visit cam.ac.uk/give-cdh

CAM

CAM